Here is a list of services that invoke Lambda functions asynchronously:

- Amazon Simple Storage Service.

- Amazon Simple Notification Service.

- Amazon Simple Email Service.

- AWS CloudFormation.

- Amazon CloudWatch Logs.

- Amazon CloudWatch Events.

- AWS CodeCommit.

- AWS Config.
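As a rough illustration of what an asynchronously invoked function receives, here is a minimal sketch of a handler for an Amazon S3 event notification. The handler name, bucket name, and object key are made up for the example; only the `Records` / `s3` / `bucket` / `object` layout follows the S3 notification format. With asynchronous invocation the calling service does not wait for the result, so the return value here is mainly useful for local testing.

```python
from urllib.parse import unquote_plus


def handler(event, context):
    """Collect (bucket, key) pairs from an S3 event notification."""
    objects = []
    for record in event.get("Records", []):
        s3 = record["s3"]
        bucket = s3["bucket"]["name"]
        # Object keys arrive URL-encoded in S3 event notifications.
        key = unquote_plus(s3["object"]["key"])
        objects.append((bucket, key))
    return objects


# Hypothetical event, trimmed to the fields the handler reads.
sample_event = {
    "Records": [
        {
            "s3": {
                "bucket": {"name": "example-bucket"},
                "object": {"key": "photos/my+cat.png"},
            }
        }
    ]
}

print(handler(sample_event, None))
```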

As another example, Lambda depends on Amazon EC2 to provide elastic network interfaces (ENIs) for VPC-enabled Lambda functions. Consequently, the scalability of such a function depends on EC2's rate limits as it scales.

AWS Lambda is a serverless compute service that runs your code in response to events and automatically manages the underlying compute resources for you. You can use AWS Lambda to extend other AWS services with custom logic, or create your own back-end services that operate at AWS scale, performance, and security.

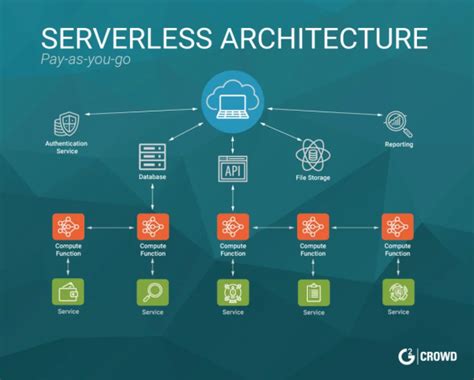

Serverless computing is a cloud computing execution model in which the cloud provider allocates machine resources on demand, taking care of the servers on behalf of their customers. Pricing is based on the actual amount of resources consumed by an application. It can be a form of utility computing.

The Serverless Framework is a free and open-source web framework written using Node.js. Serverless is the first framework developed for building applications on AWS Lambda, a serverless computing platform provided by Amazon as a part of Amazon Web Services.

AWS Lambda is a serverless compute service that lets you run code without provisioning or managing servers, creating workload-aware cluster scaling logic, maintaining event integrations, or managing runtimes.

AWS — Serverless services on AWS

- AWS Lambda. AWS Lambda lets you run code without provisioning or managing servers.

- Amazon API Gateway.

- Amazon DynamoDB.

- Amazon S3.

- Amazon Kinesis.

- Amazon Aurora.

- AWS Fargate.

- Amazon SNS.

Serverless is a cloud computing execution model where the cloud provider dynamically manages the allocation and provisioning of servers. A serverless application runs in stateless compute containers that are event-triggered, ephemeral (may last for one invocation), and fully managed by the cloud provider.

Square Enix uses AWS Lambda to run image processing for its Massively Multiplayer Online Role-Playing Game (MMORPG). With AWS Lambda, Square Enix was able to reliably handle spikes of up to 30 times normal traffic.

DynamoDB is aligned with the values of Serverless applications: automatic scaling according to your application load, pay-per-what-you-use pricing, easy to get started with, and no servers to manage. This makes DynamoDB a very popular choice for Serverless applications running in AWS.

Lambda architecture is a data-processing design pattern to handle massive quantities of data and integrate batch and real-time processing within a single framework. (Lambda architecture is distinct from and should not be confused with the AWS Lambda compute service.)
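To make the pattern concrete, here is a toy sketch of the Lambda architecture idea: a batch layer periodically recomputes a view over all historical data, a speed layer keeps an incremental view of recent events, and queries merge the two. The page-view events and function names are invented for the example, and real systems would use dedicated batch and stream processors rather than in-memory counters.

```python
from collections import Counter


def build_batch_view(historical_events):
    """Batch layer: recompute page-view counts from the full history."""
    return Counter(event["page"] for event in historical_events)


def update_speed_view(speed_view, event):
    """Speed layer: fold one recent event into the real-time view."""
    speed_view[event["page"]] += 1
    return speed_view


def query(batch_view, speed_view, page):
    """Serving layer: merge the batch and real-time views at query time."""
    return batch_view[page] + speed_view[page]


# Hypothetical data: two events already batch-processed, one still recent.
batch = build_batch_view([{"page": "/home"}, {"page": "/home"}])
speed = update_speed_view(Counter(), {"page": "/home"})
print(query(batch, speed, "/home"))
```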

Though you can use the Kinesis Client Library (KCL) to run your own custom processing application on persistent virtual machines or container instances, AWS Lambda offers serverless computing with native event source integration with Amazon Kinesis Data Streams.
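As a minimal sketch of the Lambda side of that integration, the handler below decodes a batch of Kinesis records. Kinesis delivers each record's payload base64-encoded under `record["kinesis"]["data"]`; the assumption that the payloads are JSON, and the sample values, are made up for this example.

```python
import base64
import json


def handler(event, context):
    """Decode the payload of every Kinesis record in the batch."""
    payloads = []
    for record in event["Records"]:
        raw = base64.b64decode(record["kinesis"]["data"])
        # Assumption for this sketch: producers write JSON documents.
        payloads.append(json.loads(raw))
    return payloads


def make_record(payload):
    """Build a hypothetical Kinesis record, trimmed to the field used above."""
    data = base64.b64encode(json.dumps(payload).encode("utf-8")).decode("ascii")
    return {"kinesis": {"data": data}}


sample_event = {"Records": [make_record({"sensor": "a1", "value": 21})]}
print(handler(sample_event, None))
```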

Lambda is a compute service that lets you run code without provisioning or managing servers. Lambda runs your function only when needed and scales automatically, from a few requests per day to thousands per second. You pay only for the compute time that you consume—there is no charge when your code is not running.

AWS Lambda automatically scales your application by running code in response to each trigger. Your code runs in parallel and processes each trigger individually, scaling precisely with the size of the workload. AWS defines concurrency as the number of executions of your function code that are happening at any given time.
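A common rule of thumb for estimating concurrency is to multiply the request rate by the average duration of one invocation. The sketch below applies that formula; the function name and the sample numbers are illustrative only.

```python
import math


def estimate_concurrency(requests_per_second, avg_duration_seconds):
    """Estimate concurrent executions as request rate times average duration."""
    return math.ceil(requests_per_second * avg_duration_seconds)


# 100 requests per second, each taking about half a second on average.
print(estimate_concurrency(100, 0.5))
```

For example, 100 requests per second at 0.5 seconds each keeps roughly 50 executions in flight at any moment.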

AWS Elastic Beanstalk can be classified as a tool in the "Platform as a Service" category, while AWS Lambda is grouped under "Serverless / Task Processing". There is no additional charge for Elastic Beanstalk - you pay only for the AWS resources needed to store and run your applications.

Deploying Lambdas with Tekton

- Define a GitHub repository that contains our function code as a PipelineResource object.

- Create a Task object that will run serverless deploy.

- Execute the Task by creating a TaskRun object referencing the Task and the PipelineResource as input.

Serverless computing is a method of providing backend services on an as-used basis. Servers are still used, but a company that gets backend services from a serverless vendor is charged based on usage, not a fixed amount of bandwidth or number of servers.

Reusability. Serverless components have been described as cloud legos. You can construct use cases out of these cloud legos and, once a use case is built, pick it up and place it into more robust use cases, or share it internally or externally with the world.

Testing performance. I consistently found that the serverless setup was 15% slower. (Also, if you think it's slow altogether, I am running this from Iceland, so there's some latency involved.)


The downside is that these functions can get complicated and hard to manage, especially if they must run for more than five minutes at a time in an application process (five minutes was Lambda's original execution limit; the maximum timeout is now 15 minutes). They must also be accessed by a private API gateway and will require the dependencies from common libraries to be packaged into them.

Yet while serverless computing can be advantageous for some use cases, there are plenty of good reasons to consider not using it.

- Your Workloads are Constant.

- You Fear Vendor Lock-In.

- You Need Advanced Monitoring.

- You Have Long-Running Functions.

- You Use an Unsupported Language.

The AWS Serverless Application Model (SAM) is an abstraction layer in front of CloudFormation that makes it easy to write serverless applications in AWS. There is support for three different resource types: Lambda, DynamoDB and API Gateway.

While using the serverless stack can offer substantial savings, it doesn't guarantee cheaper IT operations for all types of workloads. At times, it may even be more expensive compared to server deployments, particularly at scale.
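The trade-off can be sketched with a small cost model. Lambda-style billing combines a per-request fee with a charge per GB-second of compute, while a server costs a fixed amount regardless of load. Every price and workload figure below is a placeholder chosen for the arithmetic, not an actual AWS rate.

```python
def monthly_serverless_cost(requests, avg_duration_s, memory_gb,
                            price_per_gb_second, price_per_million_requests):
    """Usage-based cost: compute (GB-seconds) plus a per-request fee."""
    compute = requests * avg_duration_s * memory_gb * price_per_gb_second
    request_fee = requests / 1_000_000 * price_per_million_requests
    return compute + request_fee


def cheaper_option(serverless_cost, fixed_server_cost):
    """Name the cheaper deployment for the given monthly costs."""
    return "serverless" if serverless_cost < fixed_server_cost else "server"


# Placeholder workload and prices (NOT real AWS pricing).
PLACEHOLDER = dict(avg_duration_s=0.2, memory_gb=0.5,
                   price_per_gb_second=0.00002,
                   price_per_million_requests=0.20)
FIXED_SERVER_COST = 30.0  # hypothetical monthly price of an always-on server

low_traffic = monthly_serverless_cost(1_000_000, **PLACEHOLDER)
high_traffic = monthly_serverless_cost(100_000_000, **PLACEHOLDER)
print(cheaper_option(low_traffic, FIXED_SERVER_COST))
print(cheaper_option(high_traffic, FIXED_SERVER_COST))
```

Under these made-up numbers, serverless wins at one million requests per month and the fixed server wins at one hundred million, which is the "more expensive at scale" effect described above.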

Heroku. Heroku is a service for managing stateless web applications using the 12 Factor App approach that they pioneered. It has similarities to serverless applications in that much of the work of managing and maintaining servers is done for you.

Serverless simply means that you don't have to manage the servers on which your application runs. You don't have to take care of patching the system, installing antivirus software or configuring firewalls. You also don't have to worry about scaling your application as the load increases, because that is handled automatically.

Log in to your AWS Account, and navigate to the Lambda console. Click on Create function. We'll be creating a Lambda from scratch, so select the Author from scratch option. Enter an appropriate name for your Lambda function, select a Python runtime and define a role for your Lambda to use.
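For a Python runtime, the function body can be as small as the sketch below. The console's default handler setting points at a function named `lambda_handler` in `lambda_function.py`; the `name` field in the event and the greeting are invented for this example.

```python
import json


def lambda_handler(event, context):
    """Return a greeting built from an optional 'name' field in the event."""
    name = event.get("name", "world")
    return {
        "statusCode": 200,
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }


# Local smoke test with a hypothetical event; Lambda supplies the real context.
print(lambda_handler({"name": "Lambda"}, None))
```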

To deploy your function's code, you upload the deployment package from Amazon Simple Storage Service (Amazon S3) or your local machine. You can upload a .zip file as your deployment package using the Lambda console or the AWS Command Line Interface (AWS CLI), or by way of an Amazon S3 bucket.
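One way to produce such a .zip file is with Python's standard `zipfile` module. The sketch below is a minimal packaging helper; the function name and directory layout are assumptions, and the archive should be written outside the source directory so it does not end up containing itself.

```python
import zipfile
from pathlib import Path


def build_deployment_package(source_dir, zip_path):
    """Zip every file under source_dir, with paths relative to its root."""
    source = Path(source_dir)
    with zipfile.ZipFile(zip_path, "w", zipfile.ZIP_DEFLATED) as archive:
        for path in sorted(source.rglob("*")):
            if path.is_file():
                # Store files relative to the package root, as Lambda expects
                # the handler module at the top level of the archive.
                archive.write(path, path.relative_to(source).as_posix())
    return zip_path
```

The resulting file could then be uploaded through the console or the AWS CLI, or programmatically, for instance with boto3's `update_function_code` call if you use that SDK.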

Use cases of serverless architectures. We introduce how you can use cloud services like AWS Lambda, Amazon API Gateway, and Amazon DynamoDB to implement serverless architectural patterns that reduce the operational complexity of running and managing applications.
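A small sketch of that pattern: a Lambda handler behind an API Gateway REST proxy integration that stores and fetches items in a table. The event fields (`httpMethod`, `pathParameters`, `body`) follow the proxy event format, and `put_item(Item=...)` / `get_item(Key=...)` mirror the boto3 DynamoDB Table interface. The `id` key, the routes, and the `InMemoryTable` stand-in are assumptions so the example runs without AWS access.

```python
import json


def make_handler(table):
    """Build a handler that reads and writes items through `table`."""
    def handler(event, context):
        method = event["httpMethod"]
        if method == "POST":
            item = json.loads(event["body"])
            table.put_item(Item=item)
            return {"statusCode": 201, "body": json.dumps(item)}
        if method == "GET":
            item_id = event["pathParameters"]["id"]
            item = table.get_item(Key={"id": item_id}).get("Item")
            if item is None:
                return {"statusCode": 404,
                        "body": json.dumps({"error": "not found"})}
            return {"statusCode": 200, "body": json.dumps(item)}
        return {"statusCode": 405,
                "body": json.dumps({"error": "method not allowed"})}
    return handler


class InMemoryTable:
    """Hypothetical stand-in for a DynamoDB table, for local runs only."""

    def __init__(self):
        self._items = {}

    def put_item(self, Item):
        self._items[Item["id"]] = Item

    def get_item(self, Key):
        item = self._items.get(Key["id"])
        return {"Item": item} if item is not None else {}


handler = make_handler(InMemoryTable())
handler({"httpMethod": "POST", "body": json.dumps({"id": "42", "title": "demo"})}, None)
print(handler({"httpMethod": "GET", "pathParameters": {"id": "42"}}, None))
```

In a deployed function, the stand-in would be replaced by a real table object; note that a real DynamoDB table returns numeric attributes in a form that needs converting before JSON serialization.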

ans: The cloud provider is responsible for setting up the environment.

Which is not a feature of a serverless framework? ans: provider dependent.

Serverless Architecture never really has a server anywhere.