Concretely, an eigenvector with eigenvalue 0 is a nonzero vector v such that Av = 0v, i.e., such that Av = 0. These are exactly the nonzero vectors in the null space of A.
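As a quick check of this statement, here is a minimal sketch in plain Python. The matrix `A` and the vector `v` are hypothetical examples chosen for illustration; they do not come from the text above.

```python
def mat_vec(A, v):
    """Multiply a matrix (list of rows) by a vector (list of numbers)."""
    return [sum(a * x for a, x in zip(row, v)) for row in A]

# Hypothetical singular matrix: the second row is twice the first.
A = [[1, 2],
     [2, 4]]
v = [2, -1]  # nonzero candidate vector (assumed for illustration)

Av = mat_vec(A, v)

# v is an eigenvector with eigenvalue 0 exactly when v != 0 and A v = 0.
is_zero_eigenvector = any(x != 0 for x in v) and all(y == 0 for y in Av)
print(Av, is_zero_eigenvector)
```

The same test, run on every vector of a null space basis, confirms that the basis consists of eigenvectors with eigenvalue 0.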

The scalar value λ is called the eigenvalue. Note that A0 = λ · 0 holds for every λ. This is why we make the distinction that an eigenvector must be a nonzero vector, and an eigenvalue must correspond to a nonzero vector. However, the scalar value λ can be any real or complex number, including 0.

Solution: A basis for the eigenspace is a linearly independent set of vectors that spans the solutions of (A − 10I₂)v = 0; that is, a basis for the null space of the matrix A − 10I₂.
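The matrix A of this exercise is not given here, so the sketch below uses a hypothetical 2 × 2 matrix that happens to have eigenvalue 10. It forms A − 10I and checks that a candidate vector lies in its null space. The helper names `shifted` and `mat_vec` are illustrative only.

```python
def shifted(A, lam):
    """Return A - lam * I for a square matrix given as a list of rows."""
    n = len(A)
    return [[A[i][j] - (lam if i == j else 0) for j in range(n)]
            for i in range(n)]

def mat_vec(A, v):
    """Multiply a matrix (list of rows) by a vector (list of numbers)."""
    return [sum(a * x for a, x in zip(row, v)) for row in A]

# Hypothetical matrix (not the one from the exercise); its eigenvalues are 10 and 6.
A = [[8, 2],
     [2, 8]]

M = shifted(A, 10)   # A - 10*I
v = [1, 1]           # candidate eigenvector for eigenvalue 10

print(M)              # the shifted matrix
print(mat_vec(M, v))  # all zeros means v is in the null space of A - 10*I
print(mat_vec(A, v))  # equals 10 * v
```

For this assumed matrix, A − 10I has rank 1, so its null space is one-dimensional and the single vector `v` already forms a basis of the eigenspace.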

A vector v for which this equation holds is called an eigenvector of the matrix A, and the associated constant k is called the eigenvalue (or characteristic value) of the vector v. If a matrix has more than one eigenvector, the associated eigenvalues can be different for the different eigenvectors.

Yes, it can be. The determinant of a matrix equals the product of all its eigenvalues (counted with algebraic multiplicity). So, if one or more eigenvalues are zero, then the determinant is zero and the matrix is singular. Geometrically, a zero eigenvalue means the matrix collapses the direction of the corresponding eigenvector to zero, so the information along that axis is lost.
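The determinant and eigenvalue relationship can be checked numerically for 2 × 2 matrices. This sketch finds the eigenvalues as the roots of λ² − (trace)λ + det = 0. Both test matrices are hypothetical, and `cmath.sqrt` is used so that complex eigenvalues are also handled.

```python
import cmath

def det_2x2(A):
    """Determinant of a 2x2 matrix given as ((a, b), (c, d))."""
    (a, b), (c, d) = A
    return a * d - b * c

def eigenvalues_2x2(A):
    """Roots of the characteristic polynomial x^2 - trace*x + det."""
    (a, b), (c, d) = A
    trace = a + d
    root = cmath.sqrt(trace * trace - 4 * det_2x2(A))
    return (trace + root) / 2, (trace - root) / 2

regular = [[2, 1], [1, 2]]    # hypothetical invertible matrix
singular = [[1, 2], [2, 4]]   # hypothetical singular matrix

for A in (regular, singular):
    l1, l2 = eigenvalues_2x2(A)
    # The product of the eigenvalues should match the determinant.
    print(l1, l2, l1 * l2, det_2x2(A))
```

For the singular matrix one of the two roots is 0, which forces the product, and hence the determinant, to be 0.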

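The eigenspace for an eigenvalue λ is defined to be "the set of all eigenvectors with eigenvalue λ and the zero vector"; equivalently, it is the null space of A − λI. For λ = 0 this is exactly the null space of A, so in that case the two have the same definition and are thus the same. For a nonzero eigenvalue, the eigenspace is generally a different subspace from the null space of A.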

Recall that v is an eigenvector of A if Av = λv for some scalar λ. But is [129] a scalar multiple of [14]? To test AX = λX, multiply A by the candidate vector X. If the result is a multiple of the original vector, then that multiple is the eigenvalue.
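The multiply-and-compare test can be sketched as a small function. The name `eigenvalue_of` and the example matrix are assumptions made for illustration, and exact fractions are used so the comparison is not affected by rounding.

```python
from fractions import Fraction

def eigenvalue_of(A, v):
    """Return the scalar lam with A v = lam v, or None if v is not an eigenvector."""
    if all(x == 0 for x in v):
        raise ValueError("the zero vector is never an eigenvector")
    Av = [sum(Fraction(a) * x for a, x in zip(row, v)) for row in A]
    # The first nonzero entry of v fixes the only possible multiple.
    i = next(k for k, x in enumerate(v) if x != 0)
    lam = Av[i] / v[i]
    # v is an eigenvector only if every entry of A v is that same multiple.
    return lam if all(y == lam * x for y, x in zip(Av, v)) else None

# Hypothetical example matrix.
A = [[2, 0],
     [0, 3]]

print(eigenvalue_of(A, [1, 0]))  # a multiple of the input, so an eigenvalue is returned
print(eigenvalue_of(A, [1, 1]))  # A v = (2, 3) is not a multiple of (1, 1)
```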

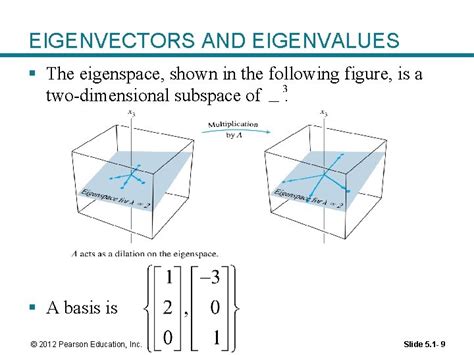

The set of all eigenvectors of T corresponding to the same eigenvalue, together with the zero vector, is called an eigenspace, or the characteristic space of T associated with that eigenvalue. If a set of eigenvectors of T forms a basis of the domain of T, then this basis is called an eigenbasis.

For an n × n matrix A, the following statements are equivalent. A is diagonalizable. The sum of the geometric multiplicities of the eigenvalues of A is equal to n. The sum of the algebraic multiplicities of the eigenvalues of A is equal to n, and for each eigenvalue, the geometric multiplicity equals the algebraic multiplicity.

Eigenvalue analysis is also used in the design of the car stereo systems, where it helps to reproduce the vibration of the car due to the music. 4. Electrical Engineering: The application of eigenvalues and eigenvectors is useful for decoupling three-phase systems through symmetrical component transformation.

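Definition 1. For a given linear operator T : V → V, a nonzero vector x and a constant scalar λ are called an eigenvector and its eigenvalue, respectively, when T(x) = λx. If x and y are both λ-eigenvectors and c is a scalar, then T(x + cy) = T(x) + cT(y) = λ(x + cy). Therefore x + cy is also a λ-eigenvector, unless it is the zero vector. Thus, the set of λ-eigenvectors, together with the zero vector, forms a subspace of F^n.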

If A is invertible, then A⁻¹ is also invertible, so they both have full rank (equal to n if both are n × n). and is not invertible. (e) The sum of two diagonalizable matrices must be diagonalizable.

If the eigenvalues are distinct, then the eigenspaces are all one-dimensional. Suppose AB = BA and Ax = λx. Then A(Bx) = B(Ax) = λBx, so x and Bx (when Bx is nonzero) are both eigenvectors of A, sharing the same λ. Assume that the eigenvalues of A are distinct (which means the eigenspaces are all one-dimensional); then Bx must be a multiple of x.
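The argument can be checked on a small case. The sketch below assumes the setting is two commuting matrices, AB = BA, which is the usual context for this statement. The matrices `A` and `B` and the eigenvector `x` are hypothetical.

```python
def mat_vec(A, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(a * x for a, x in zip(row, v)) for row in A]

def mat_mul(A, B):
    """Multiply two matrices given as lists of rows."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]

# Hypothetical commuting pair; A has the distinct eigenvalues 3 and 1.
A = [[2, 1],
     [1, 2]]
B = [[0, 1],
     [1, 0]]
commute = mat_mul(A, B) == mat_mul(B, A)

x = [1, -1]   # eigenvector of A with eigenvalue lam = 1
lam = 1
Bx = mat_vec(B, x)

# Bx is again an eigenvector of A for the same lam ...
same_eigenvalue = mat_vec(A, Bx) == [lam * t for t in Bx]
# ... and, since that eigenspace is a line, Bx is a multiple of x (here -1 * x).
print(commute, Bx, same_eigenvalue)
```

Here Bx = −x, so x is also an eigenvector of B, with eigenvalue −1.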

To find the null space of a matrix, reduce it to echelon form as described earlier. To refresh your memory, the first nonzero elements in the rows of the echelon form are the pivots. Solve the homogeneous system by back substitution as also described earlier. To refresh your memory, you solve for the pivot variables.
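A minimal sketch of this procedure in plain Python follows. As a simplification, it reduces all the way to reduced echelon form, so back substitution amounts to reading the pivot variables off each row. The function name `null_space_basis` and the example matrix are assumptions for illustration.

```python
from fractions import Fraction

def null_space_basis(A):
    """Return a basis (list of vectors) for {x : A x = 0}, using exact arithmetic."""
    rows = [[Fraction(x) for x in row] for row in A]
    n_cols = len(rows[0])
    pivot_cols = []
    r = 0  # index of the next pivot row
    for c in range(n_cols):
        # Look for a row at or below r with a nonzero entry in column c.
        p = next((i for i in range(r, len(rows)) if rows[i][c] != 0), None)
        if p is None:
            continue  # no pivot in this column: it is a free variable
        rows[r], rows[p] = rows[p], rows[r]
        pivot = rows[r][c]
        rows[r] = [x / pivot for x in rows[r]]  # scale the pivot to 1
        # Clear column c in every other row (reduced echelon form).
        for i in range(len(rows)):
            if i != r and rows[i][c] != 0:
                factor = rows[i][c]
                rows[i] = [x - factor * y for x, y in zip(rows[i], rows[r])]
        pivot_cols.append(c)
        r += 1
        if r == len(rows):
            break
    free_cols = [c for c in range(n_cols) if c not in pivot_cols]
    basis = []
    for f in free_cols:
        # Set one free variable to 1, the others to 0, and solve for the pivots.
        vec = [Fraction(0)] * n_cols
        vec[f] = Fraction(1)
        for row_idx, pc in enumerate(pivot_cols):
            vec[pc] = -rows[row_idx][f]
        basis.append(vec)
    return basis

# Hypothetical example: the second row is twice the first.
print(null_space_basis([[1, 2], [2, 4]]))
```

Each free variable contributes one basis vector, so the number of vectors returned equals the number of columns minus the number of pivots.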

The only eigenvalue is 0, and its algebraic multiplicity is 2. To find the geometric multiplicity, we compute the dimension of the kernel of A − 0·I₂, that is, the dimension of ker A, which is 1 by the rank-nullity theorem. So the geometric multiplicity of 0 is 1, which means there is only one linearly independent eigenvector with eigenvalue 0.
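The matrix for this example is not shown, so the sketch below assumes the standard 2 × 2 matrix with these properties, A = [[0, 1], [0, 0]]. It computes the rank by elimination and applies rank-nullity to get the geometric multiplicity. The helper `rank` is illustrative.

```python
from fractions import Fraction

def rank(A):
    """Rank of a matrix (list of rows), by Gaussian elimination with exact fractions."""
    rows = [[Fraction(x) for x in row] for row in A]
    found = 0  # number of pivots found so far
    for c in range(len(rows[0])):
        p = next((i for i in range(found, len(rows)) if rows[i][c] != 0), None)
        if p is None:
            continue  # no pivot in this column
        rows[found], rows[p] = rows[p], rows[found]
        # Eliminate the entries below the pivot.
        for i in range(found + 1, len(rows)):
            factor = rows[i][c] / rows[found][c]
            rows[i] = [x - factor * y for x, y in zip(rows[i], rows[found])]
        found += 1
        if found == len(rows):
            break
    return found

# Assumed matrix: trace 0 and determinant 0, so the characteristic
# polynomial is x^2 and 0 is the only eigenvalue (algebraic multiplicity 2).
A = [[0, 1],
     [0, 0]]
n = len(A)

# For eigenvalue 0, A - 0*I is A itself; rank-nullity gives dim ker A = n - rank A.
geometric_multiplicity = n - rank(A)
print(rank(A), geometric_multiplicity)
```

Because the geometric multiplicity (1) is smaller than the algebraic multiplicity (2), this assumed matrix is not diagonalizable.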